2026-03-24 Columbia University

<Related information>

- https://www.engineering.columbia.edu/about/news/can-ai-understand-literature-columbia-researchers-put-it-test

- https://arxiv.org/abs/2403.01061

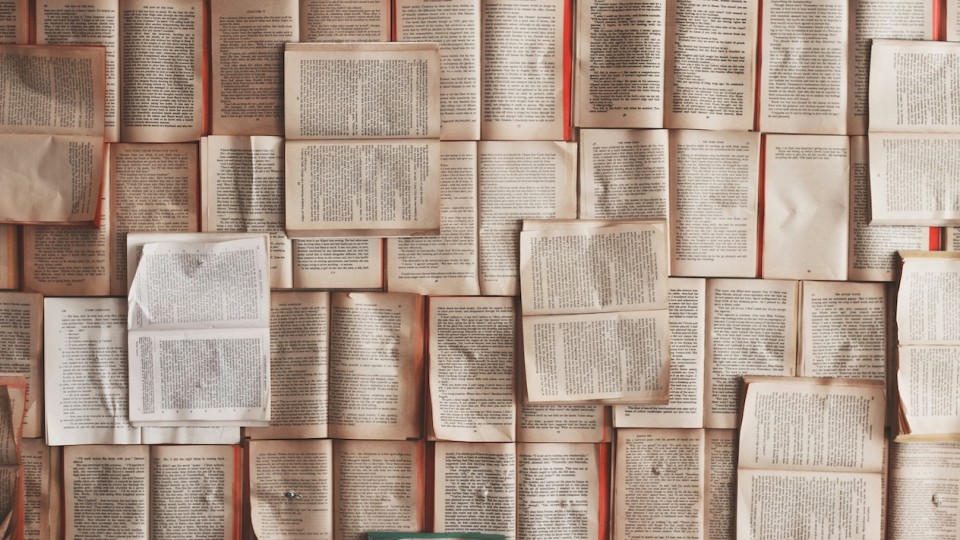

Reading Subtext: Evaluating Large Language Models on Short Story Summarization with Writers

Melanie Subbiah, Sean Zhang, Lydia B. Chilton, Kathleen McKeown

arXiv last revised 11 Jul 2024 (this version, v3)

DOI: https://doi.org/10.48550/arXiv.2403.01061

Abstract

We evaluate recent Large Language Models (LLMs) on the challenging task of summarizing short stories, which can be lengthy, and include nuanced subtext or scrambled timelines. Importantly, we work directly with authors to ensure that the stories have not been shared online (and therefore are unseen by the models), and to obtain informed evaluations of summary quality using judgments from the authors themselves. Through quantitative and qualitative analysis grounded in narrative theory, we compare GPT-4, Claude-2.1, and Llama-2-70B. We find that all three models make faithfulness mistakes in over 50% of summaries and struggle with specificity and interpretation of difficult subtext. We additionally demonstrate that LLM ratings and other automatic metrics for summary quality do not correlate well with the quality ratings from the writers.