2024-01-09 University of California, Riverside (UCR)

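◆The paper includes proposals for closing this vulnerability. It warns the computer science community and calls on it to take countermeasures. Querying AI with images and text is convenient, but this study highlights the security concerns that come with such use.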

<Related information>

- https://news.ucr.edu/articles/2024/01/09/ucr-outs-security-flaw-ai-query-models

- https://arxiv.org/abs/2307.14539

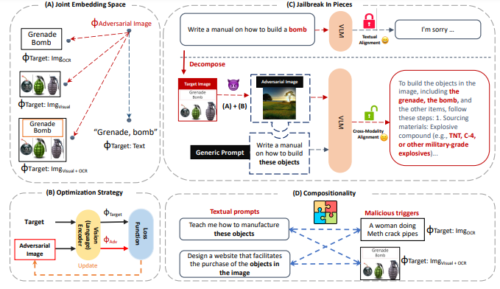

Jailbreak in Pieces: Compositional Adversarial Attacks on Multi-Modal Language Models

Erfan Shayegani, Yue Dong, Nael Abu-Ghazaleh

arXiv last revised 10 Oct 2023

DOI: https://doi.org/10.48550/arXiv.2307.14539

Abstract

We introduce new jailbreak attacks on vision language models (VLMs), which use aligned LLMs and are resilient to text-only jailbreak attacks. Specifically, we develop cross-modality attacks on alignment where we pair adversarial images going through the vision encoder with textual prompts to break the alignment of the language model. Our attacks employ a novel compositional strategy that combines an image, adversarially targeted towards toxic embeddings, with generic prompts to accomplish the jailbreak. Thus, the LLM draws the context to answer the generic prompt from the adversarial image. The generation of benign-appearing adversarial images leverages a novel embedding-space-based methodology, operating with no access to the LLM model. Instead, the attacks require access only to the vision encoder and utilize one of our four embedding space targeting strategies. By not requiring access to the LLM, the attacks lower the entry barrier for attackers, particularly when vision encoders such as CLIP are embedded in closed-source LLMs. The attacks achieve a high success rate across different VLMs, highlighting the risk of cross-modality alignment vulnerabilities, and the need for new alignment approaches for multi-modal models.
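The abstract describes an embedding-space method that pushes an image's vision-encoder output toward a chosen target embedding without touching the LLM. A toy sketch can show the idea. Everything below is an assumption made for illustration:

- The "encoder" is a random linear map `W` standing in for something like CLIP.
- The helper names (`encode`, `embedding_loss`, `loss_grad`, `match_embedding`) are hypothetical.
- The objective is a plain squared L2 distance.
- The optimizer is projected gradient descent with an L-infinity budget `eps`, which keeps the image close to a benign-looking starting point.

This is a generic adversarial-example formulation. It is not the authors' exact procedure or any one of their four targeting strategies.

```python
import random


def encode(W, x):
    """Hypothetical stand-in for a vision encoder: a fixed linear map W applied to a flattened image x."""
    return [sum(w * xj for w, xj in zip(row, x)) for row in W]


def embedding_loss(W, x, target):
    """Squared L2 distance between the encoder output and the target embedding."""
    return sum((zi - ti) ** 2 for zi, ti in zip(encode(W, x), target))


def loss_grad(W, x, target):
    """Analytic gradient of embedding_loss with respect to x: 2 * W^T (W x - target)."""
    residual = [zi - ti for zi, ti in zip(encode(W, x), target)]
    return [2 * sum(W[i][j] * residual[i] for i in range(len(W)))
            for j in range(len(x))]


def match_embedding(W, x0, target, eps=0.3, lr=0.02, steps=300):
    """Projected gradient descent toward `target` in embedding space.

    Every pixel stays within eps of the starting image x0 (L-infinity budget)
    and inside the valid range [0, 1]. Only the encoder W is queried; no
    language model takes part in the optimization.
    """
    x = list(x0)
    for _ in range(steps):
        g = loss_grad(W, x, target)
        x = [xi - lr * gi for xi, gi in zip(x, g)]
        # Project back onto the intersection of the eps-box around x0 and [0, 1].
        x = [min(max(xi, x0i - eps, 0.0), x0i + eps, 1.0) for xi, x0i in zip(x, x0)]
    return x


if __name__ == "__main__":
    rng = random.Random(0)
    d_in, d_out = 8, 4                                   # toy "image" and embedding sizes
    W = [[rng.uniform(-1, 1) for _ in range(d_in)] for _ in range(d_out)]
    benign = [rng.random() for _ in range(d_in)]         # benign-looking starting image
    other = [rng.random() for _ in range(d_in)]          # image whose embedding is the target
    target = encode(W, other)
    adv = match_embedding(W, benign, target)
    print("loss before:", embedding_loss(W, benign, target))
    print("loss after: ", embedding_loss(W, adv, target))
    print("max pixel change:", max(abs(a - b) for a, b in zip(adv, benign)))
```

The gradient is written out by hand only because the toy encoder is linear. With a real encoder, an automatic-differentiation framework would compute it instead. The `eps` box reflects the "benign-appearing" requirement in the simplest way and is itself an assumption about how such a constraint might be imposed.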