2023-06-14 Carnegie Mellon University

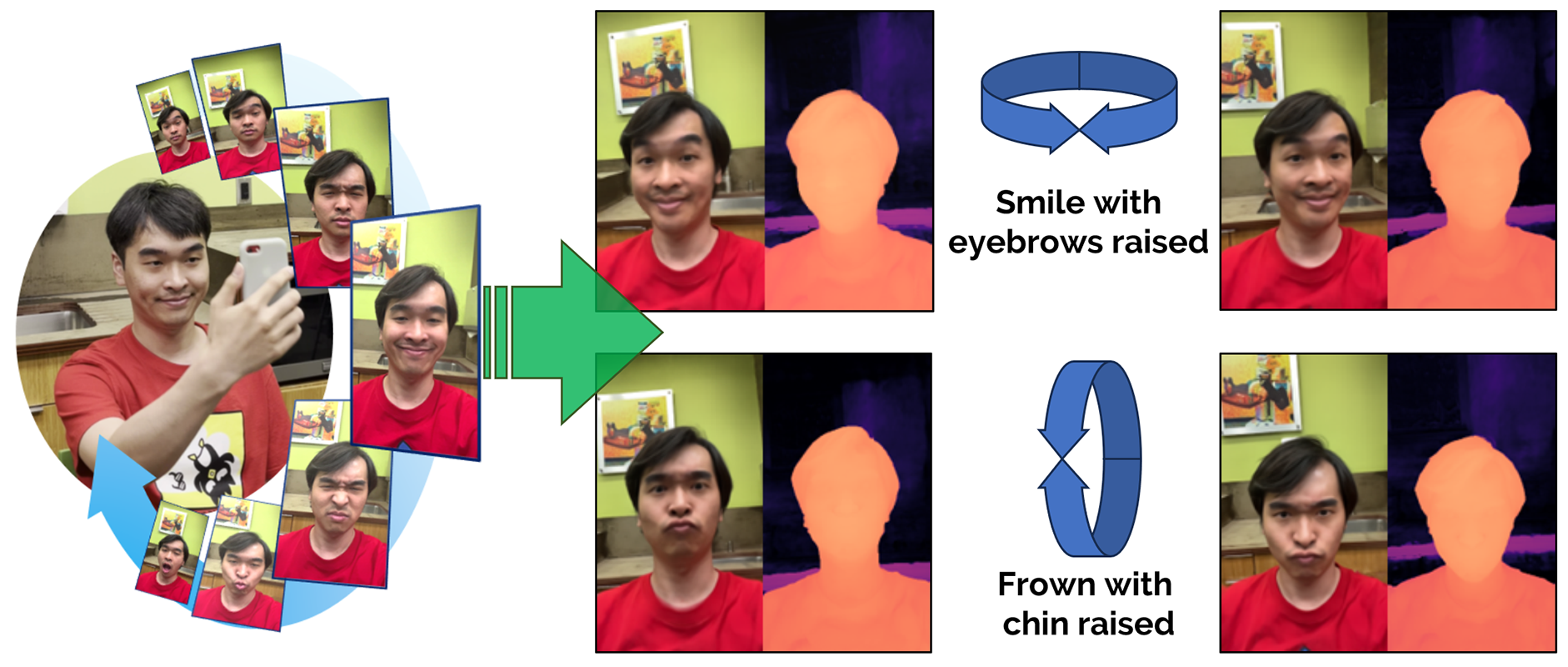

Dynamic Light Field Network (DyLiN) accommodates dynamic scene deformations, such as in avatar animation, where facial expressions can be used as controllable input attributes.

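◆The study tested DyLiN on synthetic and real-world datasets, where it outperformed prior methods in both speed and visual fidelity. The authors also introduced a method called CoDyLiN, which improves on DyLiN further by making attribute inputs controllable.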

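◆This work marks an important advance in 3D modeling and rendering, and it could transform industries that rely on deformable 3D models, such as virtual simulation, augmented reality, gaming, and animation.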

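◆Starting from 2D images, DyLiN and CoDyLiN accurately reproduce motion and change in a realistic 3D space, and they are expected to find a range of applications, such as transferring realistic facial expressions.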

<Related Information>

DyLiN: Making Light Field Networks Dynamic

Heng Yu, Joel Julin, Zoltan A. Milacski, Koichiro Niinuma, Laszlo A. Jeni

arXiv Submitted on 24 Mar 2023

DOI: https://doi.org/10.48550/arXiv.2303.14243

Light Field Networks, the re-formulations of radiance fields to oriented rays, are orders of magnitude faster than their coordinate network counterparts, and provide higher fidelity with respect to representing 3D structures from 2D observations. They would be well suited for generic scene representation and manipulation, but suffer from one problem: they are limited to holistic and static scenes. In this paper, we propose the Dynamic Light Field Network (DyLiN) method that can handle non-rigid deformations, including topological changes. We learn a deformation field from input rays to canonical rays, and lift them into a higher dimensional space to handle discontinuities. We further introduce CoDyLiN, which augments DyLiN with controllable attribute inputs. We train both models via knowledge distillation from pretrained dynamic radiance fields. We evaluated DyLiN using both synthetic and real-world datasets that include various non-rigid deformations. DyLiN qualitatively outperformed and quantitatively matched state-of-the-art methods in terms of visual fidelity, while being 25-71x computationally faster. We also tested CoDyLiN on attribute-annotated data and it surpassed its teacher model.
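The data flow described in the abstract (deform an input ray to a canonical ray, lift it into a higher-dimensional space, predict a color in a single network evaluation, and train against a teacher) can be illustrated with a minimal NumPy sketch. This is not the authors' implementation. The 6D Plücker ray encoding, the layer sizes, the `HYPER_DIM` value, the random untrained weights, and every function name here are assumptions chosen only for illustration, and the teacher colors are random placeholders standing in for renders from a pretrained dynamic radiance field.

```python
import numpy as np

rng = np.random.default_rng(0)

def plucker(origins, directions):
    """Encode rays as 6D Plücker coordinates (d, o x d) with unit direction d.
    Assumption: this is one common parameterization of oriented rays."""
    d = directions / np.linalg.norm(directions, axis=-1, keepdims=True)
    m = np.cross(origins, d)  # moment vector, independent of the point chosen on the ray
    return np.concatenate([d, m], axis=-1)

def init_mlp(sizes):
    """Random weights for a small fully connected network (hypothetical sizes)."""
    return [(rng.normal(0.0, 0.1, (n_in, n_out)), np.zeros(n_out))
            for n_in, n_out in zip(sizes[:-1], sizes[1:])]

def mlp(params, x):
    """Apply ReLU hidden layers followed by a linear output layer."""
    for w, b in params[:-1]:
        x = np.maximum(x @ w + b, 0.0)
    w, b = params[-1]
    return x @ w + b

HYPER_DIM = 2                                 # assumed size of the lifted space
deform_net = init_mlp([7, 32, 6])             # (ray, t) -> offset toward a canonical ray
hyper_net = init_mlp([7, 32, HYPER_DIM])      # (ray, t) -> higher-dimensional coordinates
color_net = init_mlp([6 + HYPER_DIM, 32, 3])  # (canonical ray, lifted coords) -> RGB

def dylin_forward(origins, directions, t):
    """One network pass per ray: no sampling of points along the ray."""
    rays = plucker(origins, directions)
    t_col = np.full((rays.shape[0], 1), float(t))
    inp = np.concatenate([rays, t_col], axis=-1)
    canonical = rays + mlp(deform_net, inp)   # deformation field on rays
    lifted = mlp(hyper_net, inp)              # lifting to handle discontinuities
    logits = mlp(color_net, np.concatenate([canonical, lifted], axis=-1))
    return 1.0 / (1.0 + np.exp(-logits))      # sigmoid keeps RGB in (0, 1)

def distillation_loss(student_rgb, teacher_rgb):
    """Mean squared error between student and teacher colors."""
    return float(np.mean((student_rgb - teacher_rgb) ** 2))

# Usage: four random rays at time t = 0.5, compared with placeholder teacher colors.
origins = rng.normal(size=(4, 3))
directions = rng.normal(size=(4, 3))
student_rgb = dylin_forward(origins, directions, t=0.5)
teacher_rgb = rng.uniform(size=(4, 3))        # placeholder, not real teacher output
loss = distillation_loss(student_rgb, teacher_rgb)
```

Because each ray needs only one forward pass instead of many samples along its length, a design of this kind is where the speedup over coordinate-based radiance fields would come from. A controllable variant in the spirit of CoDyLiN could append attribute values to `inp`, though that extension is not shown here.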