2024-02-28 Swiss Federal Institute of Technology Lausanne (EPFL)

©EPFL/iStock photos (da-kuk)

<Related information>

- https://actu.epfl.ch/news/charting-new-paths-in-ai-learning/

- https://www.pnas.org/doi/10.1073/pnas.2316301121

On the different regimes of stochastic gradient descent

Antonio Sclocchi and Matthieu Wyart

Proceedings of the National Academy of Sciences, Published: February 20, 2024

DOI: https://doi.org/10.1073/pnas.2316301121

Significance

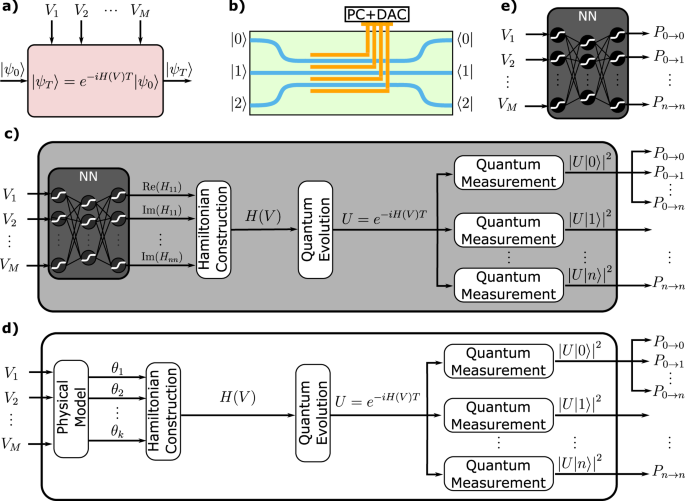

The success of deep learning contrasts with its limited understanding. One example is stochastic gradient descent, the main algorithm used to train neural networks. It depends on hyperparameters whose choice has little theoretical guidance and relies on expensive trial-and-error procedures. In this work, we clarify how these hyperparameters affect the training dynamics of neural networks, leading to a phase diagram with distinct dynamical regimes. Our results explain the surprising observation that these hyperparameters strongly depend on the number of data available. We show that this dependence is controlled by the difficulty of the task being learned.

Abstract

Modern deep networks are trained with stochastic gradient descent (SGD), whose key hyperparameters are the number of data considered at each step, or batch size B, and the step size, or learning rate η. For small B and large η, SGD corresponds to a stochastic evolution of the parameters, whose noise amplitude is governed by the “temperature” T≡η/B. Yet this description is observed to break down for sufficiently large batches B≥B∗, or simplifies to gradient descent (GD) when the temperature is sufficiently small. Understanding where these cross-overs take place remains a central challenge. Here, we resolve these questions for a teacher-student perceptron classification model and show empirically that our key predictions still apply to deep networks. Specifically, we obtain a phase diagram in the B-η plane that separates three dynamical phases: i) a noise-dominated SGD governed by temperature, ii) a large-first-step-dominated SGD and iii) GD. These different phases also correspond to different regimes of generalization error. Remarkably, our analysis reveals that the batch size B∗ separating regimes (i) and (ii) scales with the size P of the training set, with an exponent that characterizes the hardness of the classification problem.
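To make the roles of B, η and T≡η/B concrete, the following is a minimal sketch of minibatch SGD on a teacher-student perceptron, using only the Python standard library. It is an illustration under stated assumptions, not the authors' code: the hinge loss with margin `kappa`, the Gaussian inputs, the default values, and all function names (`make_teacher_student_data`, `temperature`, `sgd_step`, `training_error`, `train`) are hypothetical choices made here for illustration.

```python
import random


def make_teacher_student_data(P, d, rng):
    """Draw P Gaussian inputs in d dimensions, labelled by a random teacher vector."""
    teacher = [rng.gauss(0.0, 1.0) for _ in range(d)]
    xs = [[rng.gauss(0.0, 1.0) for _ in range(d)] for _ in range(P)]
    ys = [1.0 if sum(t * x for t, x in zip(teacher, xi)) >= 0 else -1.0 for xi in xs]
    return xs, ys


def temperature(eta, B):
    """SGD 'temperature' T = eta / B, as defined in the abstract."""
    return eta / B


def sgd_step(w, xs, ys, eta, B, kappa, rng):
    """One SGD step on the batch-averaged hinge loss max(0, kappa - y * w.x).

    The hinge loss and the margin kappa are assumptions of this sketch.
    """
    batch = rng.sample(range(len(xs)), B)  # B distinct training indices
    grad = [0.0] * len(w)
    for i in batch:
        margin = ys[i] * sum(wj * xj for wj, xj in zip(w, xs[i]))
        if margin < kappa:  # only margin-violating points contribute
            for j in range(len(w)):
                grad[j] -= ys[i] * xs[i][j] / B
    return [wj - eta * gj for wj, gj in zip(w, grad)]


def training_error(w, xs, ys):
    """Fraction of training points the student misclassifies."""
    wrong = 0
    for xi, yi in zip(xs, ys):
        if yi * sum(wj * xj for wj, xj in zip(w, xi)) <= 0:
            wrong += 1
    return wrong / len(xs)


def train(P=200, d=10, eta=0.1, B=4, kappa=1.0, steps=2000, seed=0):
    """Train a student perceptron from zero weights; return (weights, training error)."""
    rng = random.Random(seed)
    xs, ys = make_teacher_student_data(P, d, rng)
    w = [0.0] * d
    for _ in range(steps):
        w = sgd_step(w, xs, ys, eta, B, kappa, rng)
    return w, training_error(w, xs, ys)


if __name__ == "__main__":
    for eta, B in [(0.1, 4), (0.2, 8), (0.1, 200)]:
        _, err = train(eta=eta, B=B)
        print(f"eta={eta}, B={B}, T={temperature(eta, B):.4f}, train error={err:.3f}")
```

The first two settings in the usage loop share the same T, which is the quantity the abstract says governs the noise-dominated regime, while the third uses the full training set as the batch (B = P), so each step is a plain gradient-descent step. Locating the crossover B∗ would require sweeping B and η over a much wider range than this sketch does.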