2026-03-13 Brown University

<Related information>

LEGS-POMDP: Language and Gesture-Guided Object Search in Partially Observable Environments

Ivy He, Stefanie Tellex, Jason Xinyu Liu

ACM/IEEE International Conference on Human-Robot Interaction (HRI) 2026 March 17

Abstract

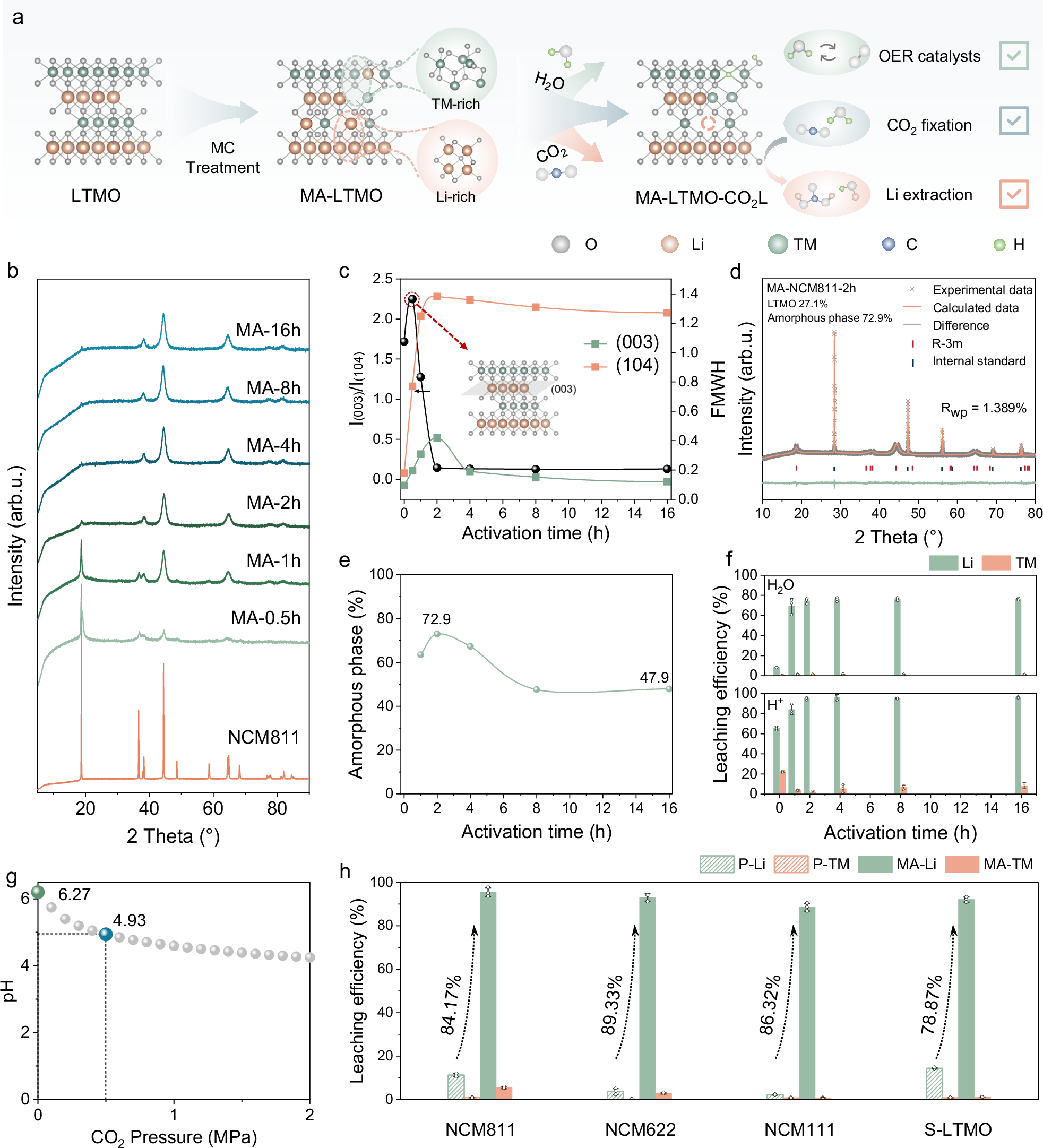

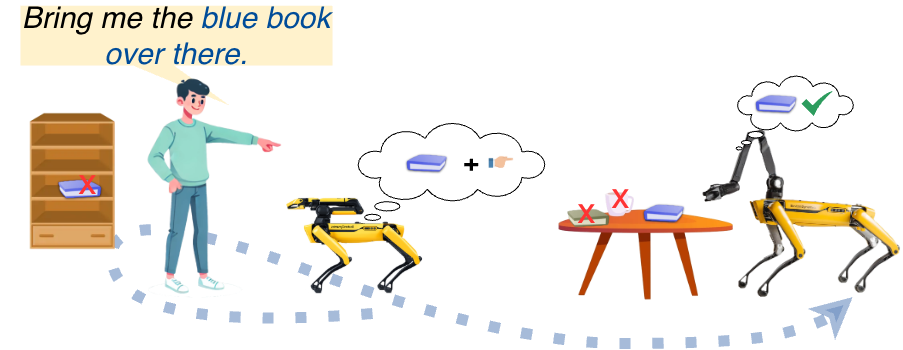

To assist humans in open-world environments, robots must accurately interpret ambiguous instructions to locate desired objects. Foundation model-based approaches excel at referring expression grounding and multimodal instruction understanding, but lack a principled mechanism to model uncertainty in long-horizon tasks. Conversely, Partially Observable Markov Decision Processes (POMDPs) provide a systematic framework for planning under uncertainty but are typically limited in modalities and environment assumptions.

To achieve the best of both worlds, we introduce LanguagE and GeSture-Guided Object Search in Partially Observable Environments (LEGS-POMDP), a modular POMDP system that integrates language, gesture, and visual observations for open-world object search. Unlike prior work, LEGS-POMDP explicitly models two sources of partial observability: uncertainty over the target object’s identity and its spatial location.
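The two sources of partial observability named above can be illustrated with a single joint belief over (object identity, location) that is updated by each modality in turn. The following is a minimal sketch, not the authors' implementation. The grid world, the function names, the Gaussian pointing cone, the detector rates, and the assumption that language, gesture, and vision are conditionally independent given the state are all hypothetical choices made for illustration.

```python
import math
from itertools import product


def normalize(belief):
    """Rescale a {state: weight} dict so the weights sum to 1."""
    total = sum(belief.values())
    if total <= 0:
        raise ValueError("belief has zero mass; observation models are inconsistent")
    return {s: p / total for s, p in belief.items()}


def uniform_belief(objects, cells):
    """Uniform prior over every (object identity, location) pair."""
    states = list(product(objects, cells))
    return {s: 1.0 / len(states) for s in states}


def update(belief, likelihood_fn):
    """Bayes update for a static target: posterior is prior times likelihood."""
    return normalize({s: p * likelihood_fn(s) for s, p in belief.items()})


def language_likelihood(state, scores):
    """Informs identity only. `scores` stands in for a grounding model's output."""
    obj, _ = state
    return scores.get(obj, 1e-3)


def gesture_likelihood(state, origin, direction_rad, sigma_rad=0.4):
    """Informs location only: cells near the pointing ray are more likely."""
    _, (x, y) = state
    dx, dy = x - origin[0], y - origin[1]
    if dx == 0 and dy == 0:
        return 1.0
    angle = math.atan2(dy, dx)
    # Wrap the angular difference into [-pi, pi].
    diff = math.atan2(math.sin(angle - direction_rad), math.cos(angle - direction_rad))
    return math.exp(-0.5 * (diff / sigma_rad) ** 2)


def visual_likelihood(state, visible_cells, detection, tp=0.9, fp=0.05):
    """Simplified detector model (assumed rates; not normalized over detections).

    `detection` is None or an (object, cell) pair reported by the detector.
    """
    _, cell = state
    if cell not in visible_cells:
        # Target is out of view, so any reported detection is a false positive.
        return fp if detection is not None else 1.0 - fp
    return tp if detection == state else 1.0 - tp


def marginal_over_objects(belief):
    """Sum out location to get the belief over the target's identity."""
    out = {}
    for (obj, _), p in belief.items():
        out[obj] = out.get(obj, 0.0) + p
    return out


# Hypothetical 3x3 grid with two candidate objects.
objects = ["mug", "bottle"]
cells = [(x, y) for x in range(3) for y in range(3)]

belief = uniform_belief(objects, cells)
belief = update(belief, lambda s: language_likelihood(s, {"mug": 0.8, "bottle": 0.2}))
after_language = marginal_over_objects(belief)

# Human stands at (0, 0) and points along the +x axis.
belief = update(belief, lambda s: gesture_likelihood(s, (0, 0), 0.0))

# Robot looks at two cells and the detector reports a mug at (2, 0).
belief = update(
    belief,
    lambda s: visual_likelihood(s, {(1, 0), (2, 0)}, ("mug", (2, 0))),
)
best_state = max(belief, key=belief.get)
```

In this sketch, language narrows the identity, gesture narrows the location, and vision informs both, so the posterior mass concentrates on one (object, cell) pair as observations accumulate. A full POMDP planner would also choose actions, such as where to look next, based on this belief; that part is omitted here.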

Simulation results show that multimodal fusion significantly outperforms unimodal baselines, achieving an average success rate of 89% ± 7% across challenging environments and object categories. We demonstrate the full system on a Boston Dynamics Spot quadruped mobile manipulator, where real-world experiments qualitatively validate robust multimodal perception and uncertainty reduction under ambiguous human instructions.