2024-10-09 California Institute of Technology (Caltech)

<Related information>

- https://www.caltech.edu/about/news/new-algorithm-enables-neural-networks-to-learn-continuously

- https://www.nature.com/articles/s42256-024-00902-x

Engineering flexible machine learning systems by traversing functionally invariant paths

Guruprasad Raghavan, Bahey Tharwat, Surya Narayanan Hari, Dhruvil Satani, Rex Liu & Matt Thomson

Nature Machine Intelligence, Published: 03 October 2024

DOI: https://doi.org/10.1038/s42256-024-00902-x

Abstract

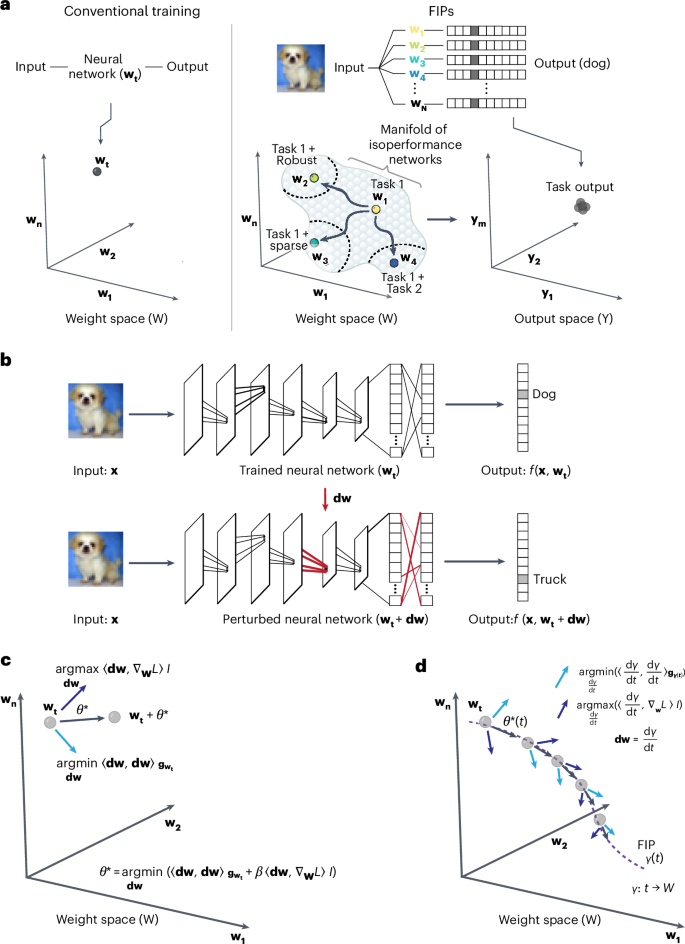

Contemporary machine learning algorithms train artificial neural networks by setting network weights to a single optimized configuration through gradient descent on task-specific training data. The resulting networks can achieve human-level performance on natural language processing, image analysis and agent-based tasks, but lack the flexibility and robustness characteristic of human intelligence. Here we introduce a differential geometry framework—functionally invariant paths—that provides flexible and continuous adaptation of trained neural networks so that secondary tasks can be achieved beyond the main machine learning goal, including increased network sparsification and adversarial robustness. We formulate the weight space of a neural network as a curved Riemannian manifold equipped with a metric tensor whose spectrum defines low-rank subspaces in weight space that accommodate network adaptation without loss of prior knowledge. We formalize adaptation as movement along a geodesic path in weight space while searching for networks that accommodate secondary objectives. With modest computational resources, the functionally invariant path algorithm achieves performance comparable with or exceeding state-of-the-art methods including low-rank adaptation on continual learning, sparsification and adversarial robustness tasks for large language models (bidirectional encoder representations from transformers), vision transformers (ViT and DeIT) and convolutional neural networks.
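The core idea in the abstract, moving through weight space along directions that leave the network's outputs nearly unchanged while pursuing a secondary objective, can be illustrated with a small NumPy sketch. This is not the authors' implementation: the tiny tanh network, the finite-difference Jacobian, the eigenvalue threshold `tol`, and the names `forward`, `jacobian` and `invariant_step` are all assumptions made for illustration. The metric is taken to be g = JᵀJ, where J is the Jacobian of the outputs with respect to the weights, and the squared weight norm serves as a simple stand-in for a sparsification objective.

```python
import numpy as np

def forward(w, X, hidden=4):
    """Tiny one-hidden-layer tanh network; w is a flat weight vector (illustrative model)."""
    d = X.shape[1]
    W1 = w[: d * hidden].reshape(d, hidden)
    w2 = w[d * hidden:]
    return np.tanh(X @ W1) @ w2  # one output per sample

def jacobian(w, X, eps=1e-6):
    """Central finite-difference Jacobian of the outputs with respect to the weights."""
    J = np.zeros((X.shape[0], w.size))
    for i in range(w.size):
        dw = np.zeros_like(w)
        dw[i] = eps
        J[:, i] = (forward(w + dw, X) - forward(w - dw, X)) / (2 * eps)
    return J

def invariant_step(w, X, secondary_grad, step=0.005, tol=1e-6):
    """Step against secondary_grad, restricted to near-null directions of g = J^T J."""
    J = jacobian(w, X)
    g = J.T @ J  # assumed metric: how strongly each weight direction changes the outputs
    eigvals, eigvecs = np.linalg.eigh(g)
    flat = eigvecs[:, eigvals < tol * eigvals.max()]  # directions that barely change outputs
    direction = -flat @ (flat.T @ secondary_grad)     # projected descent direction
    return w + step * direction

rng = np.random.default_rng(0)
X = rng.normal(size=(5, 3))        # 5 samples, 3 features (synthetic data)
w0 = rng.normal(size=3 * 4 + 4)    # 16 weights, so g has at least 11 near-zero eigenvalues
w = w0.copy()
for _ in range(200):
    # Secondary objective: 0.5 * ||w||^2, whose gradient is w itself.
    w = invariant_step(w, X, secondary_grad=w)

output_drift = np.max(np.abs(forward(w, X) - forward(w0, X)))
norm_before, norm_after = np.linalg.norm(w0), np.linalg.norm(w)
print(f"weight norm: {norm_before:.3f} -> {norm_after:.3f}, max output drift: {output_drift:.4f}")
```

Because the metric is recomputed at every step, the path follows the changing flat directions rather than a single fixed subspace, which is the sense in which the weight space is treated as curved. Each step is only first-order invariant, so a small output drift accumulates along the path; shrinking `step` reduces it.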