2024-06-13 North Carolina State University (NC State)

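◆The research team tested MvACon in combination with three leading vision transformers (BEVFormer, BEVFormer DFA3D, and PETR) and confirmed marked performance improvements in determining the position, speed, and orientation of objects. The added computational cost was almost negligible. As next steps, the team plans to test MvACon against more benchmark datasets and against actual video input from autonomous vehicles. If MvACon continues to perform well, wide adoption is expected.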

<Related Information>

- https://news.ncsu.edu/2024/06/improved-3d-mapping-multiple-cameras/

- https://arxiv.org/abs/2405.12200

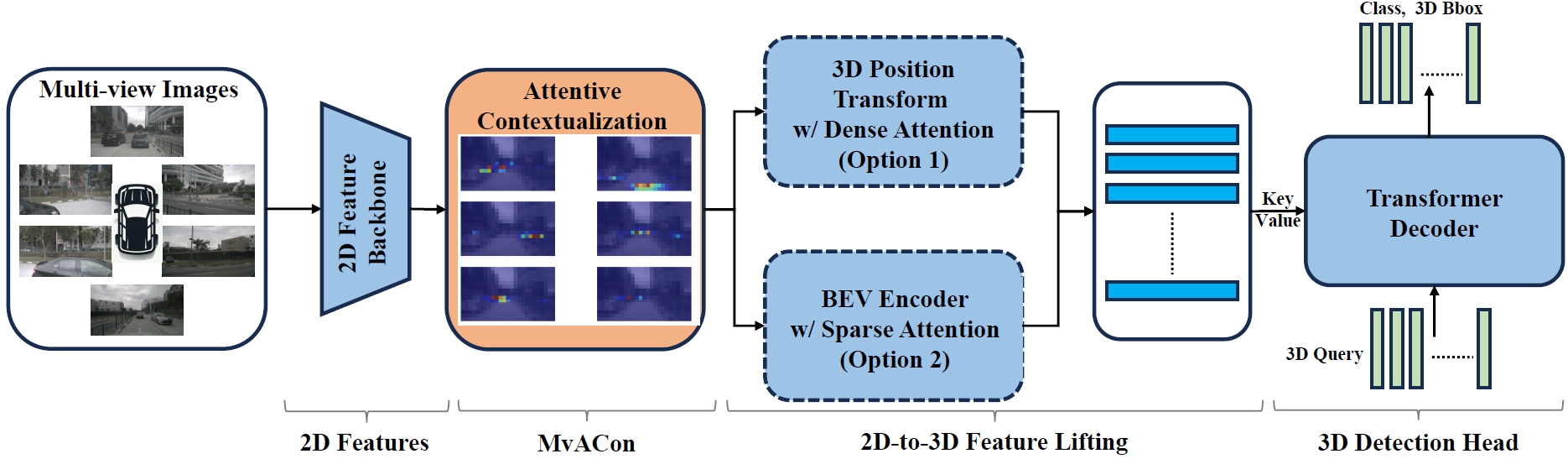

Multi-View Attentive Contextualization for Multi-View 3D Object Detection

Xianpeng Liu, Ce Zheng, Ming Qian, Nan Xue, Chen Chen, Zhebin Zhang, Chen Li, Tianfu Wu

arXiv Submitted on 20 May 2024

DOI:https://doi.org/10.48550/arXiv.2405.12200

Abstract

We present Multi-View Attentive Contextualization (MvACon), a simple yet effective method for improving 2D-to-3D feature lifting in query-based multi-view 3D (MV3D) object detection. Despite remarkable progress witnessed in the field of query-based MV3D object detection, prior art often suffers from either the lack of exploiting high-resolution 2D features in dense attention-based lifting, due to high computational costs, or from insufficiently dense grounding of 3D queries to multi-scale 2D features in sparse attention-based lifting. Our proposed MvACon hits the two birds with one stone using a representationally dense yet computationally sparse attentive feature contextualization scheme that is agnostic to specific 2D-to-3D feature lifting approaches. In experiments, the proposed MvACon is thoroughly tested on the nuScenes benchmark, using both the BEVFormer and its recent 3D deformable attention (DFA3D) variant, as well as the PETR, showing consistent detection performance improvement, especially in enhancing performance in location, orientation, and velocity prediction. It is also tested on the Waymo-mini benchmark using BEVFormer with similar improvement. We qualitatively and quantitatively show that global cluster-based contexts effectively encode dense scene-level contexts for MV3D object detection. The promising results of our proposed MvACon reinforces the adage in computer vision — “(contextualized) feature matters”.